Abstract

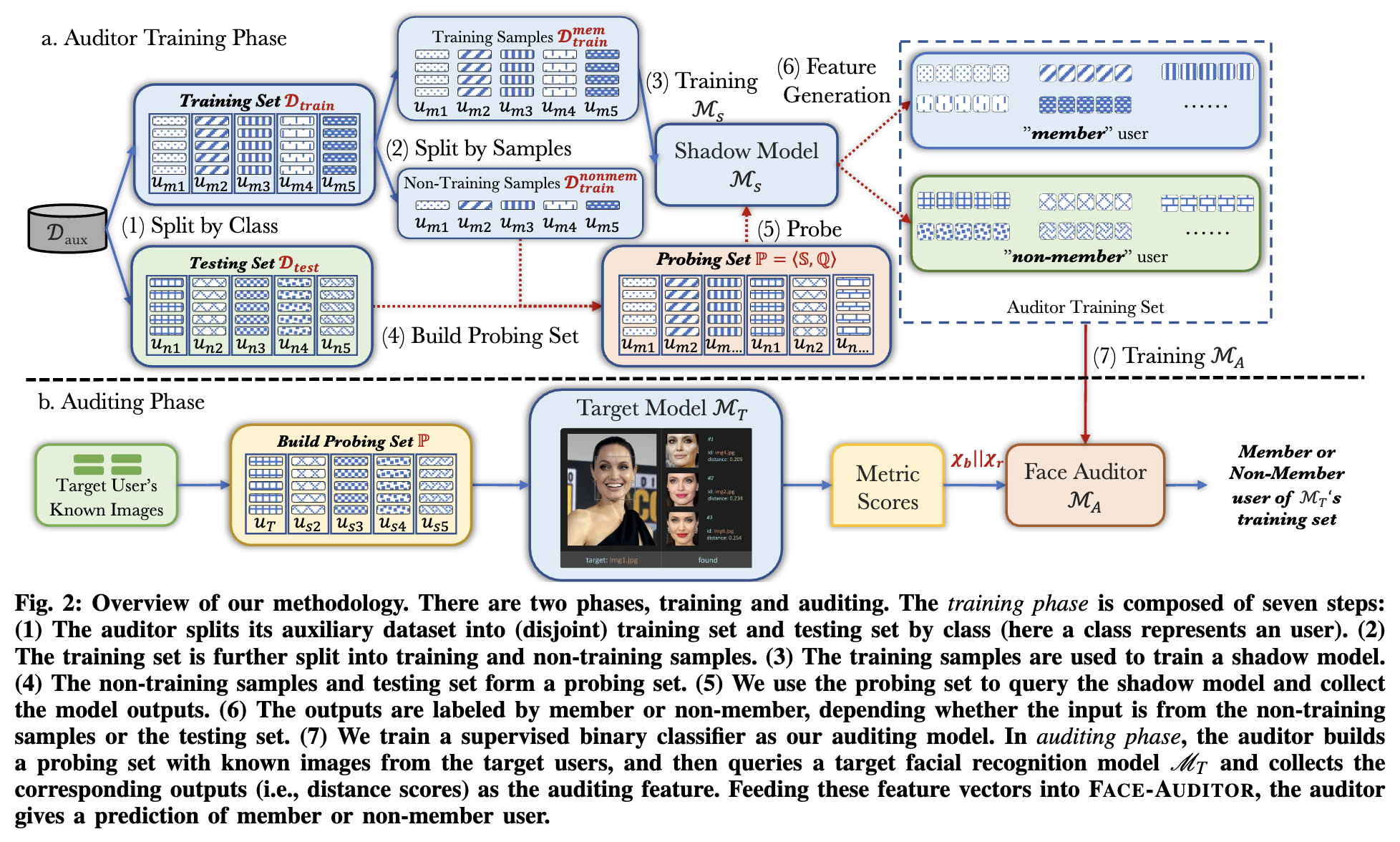

Few-shot-based facial recognition systems have gained increasing attention due to their scalability and ability to work with only a few face images. However, the power of facial recognition systems enables anyone with moderate resources to canvass the Internet and build well-performing facial recognition models without people's awareness or consent. To prevent face images from being misused, one straightforward approach is to modify the raw face images before sharing them, which inevitably destroys semantic information and remains prone to adaptive attacks. In this paper, we take a different angle by advocating an auditing approach that enables ordinary users to detect whether their private face images were used to train a facial recognition system, and thereby to claim ownership of their face images. We formulate the auditing process as a user-level membership inference problem and propose a complete toolkit, FACE-AUDITOR, that carefully chooses the probing set used to query the few-shot-based facial recognition model and determines whether any of a user's face images was used in training the model. We further propose to use the similarity scores between the original face images as reference information to improve the auditing performance. Extensive experiments on multiple real-world face image datasets show that FACE-AUDITOR can achieve an auditing accuracy of up to $99\%$. Finally, we show that FACE-AUDITOR is robust in the presence of several perturbation mechanisms applied to the training images or the target models.
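To make the user-level auditing idea concrete, the following is a minimal sketch and not the FACE-AUDITOR implementation. It assumes the target model can be queried for a similarity score between two images, treats each image as a plain feature vector, uses cosine similarity as a stand-in for the reference similarity between original images, and replaces any learned decision rule with a fixed threshold. The names `audit_user`, `target_similarity`, `reference_similarity`, and `threshold` are all hypothetical, as is the assumption that a positive gap between target and reference scores signals membership.

```python
from statistics import mean
from typing import Callable, Sequence

# Stand-in for a face image: a plain feature vector (assumption for this sketch).
Image = Sequence[float]
Similarity = Callable[[Image, Image], float]


def cosine(a: Image, b: Image) -> float:
    """Cosine similarity, used here as a hypothetical reference metric."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sum(x * x for x in a) ** 0.5
    norm_b = sum(y * y for y in b) ** 0.5
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def audit_user(
    target_similarity: Similarity,
    probing_set: Sequence[Image],
    reference_similarity: Similarity = cosine,
    threshold: float = 0.1,
) -> bool:
    """Flag a user as a likely member of the training set.

    Queries the target model on every pair of the user's probing images and
    compares its score with the reference similarity of the same pair.
    The threshold rule is an illustrative assumption, not the paper's method.
    """
    gaps = []
    for i in range(len(probing_set)):
        for j in range(i + 1, len(probing_set)):
            a, b = probing_set[i], probing_set[j]
            gaps.append(target_similarity(a, b) - reference_similarity(a, b))
    if not gaps:
        raise ValueError("probing_set must contain at least two images")
    return mean(gaps) > threshold


# Toy target models (hypothetical): one that inflates similarity for this
# user's images, and one that behaves exactly like the reference metric.
def member_model(a: Image, b: Image) -> float:
    return min(1.0, cosine(a, b) + 0.3)


def nonmember_model(a: Image, b: Image) -> float:
    return cosine(a, b)


probing = [[1.0, 0.0], [0.8, 0.6], [0.6, 0.8]]
member_flag = audit_user(member_model, probing)
nonmember_flag = audit_user(nonmember_model, probing)
```

The decision is made once per user over the whole probing set rather than once per image, which is what distinguishes user-level from sample-level membership inference.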

Citation

@inproceedings{CZWBZ23,
  author = {Min Chen and Zhikun Zhang and Tianhao Wang and Michael Backes and Yang Zhang},
  title = {{FACE-AUDITOR: Data Auditing in Facial Recognition Systems}},
  booktitle = {{USENIX Security}},
  publisher = {USENIX Association},
  year = {2023},
}